By David Warren-Angelucci, OSS Channel Sales Manager

HPC hardware for AI Workflows on the Edge

The building blocks of an AI workflow are the same as those of any computational workflow: acquiring data, storing it, computing on it, and acting on the results.

While most AI workflows run in the controlled environment of a datacenter, where servers have the HPC resources the applications need, many current AI applications require some or all of the workflow steps to be performed in the field, under harsh environmental conditions. Until now, companies with applications on the ‘edge’ have had to rely on low-performance hardware or accept the latency of uploading data to the cloud. Rugged edge-computing devices, such as industrial PCs and IoT devices, can withstand extreme environmental conditions, but they do not come close to the computational performance of datacenter servers. As a result, AI applications on the ‘edge’ have had to compromise on performance, but not anymore!

With our latest line of “AI Transportable” products, One Stop Systems (OSS) supplies rugged appliances that deliver datacenter-class performance and can be used for AI workflows in cars, planes, trucks, ships, drones, and other environments that have never been able to support HPC hardware. The products in the AI Transportable line are rugged, datacenter-type HPC products tailored to each of the four steps in the AI workflow. Companies with edge applications that demand the highest compute performance cannot afford to compromise; they need the components of the datacenter in the field.

With our “AI Transportable” product line, OSS brings the power of the datacenter to the edge!

OSS designs and manufactures high-performance computing systems that are uniquely positioned to support each stage of the AI Transportable workflow, with a range of products tailored to the needs of each stage and the requirements of the application.

The 4 Stages of the AI Workflow

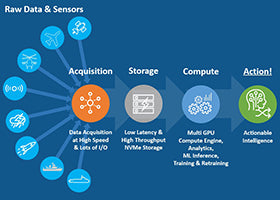

The ultimate goal of the AI workflow is to process raw data into actionable intelligence. OSS provides hardware platforms which expedite AI workflows and significantly reduce the time to take action.

The four fundamental building blocks of an AI workflow are: gathering raw data from sensors and other I/O devices (OSS has products that acquire large amounts of data at high speed); storing that data (OSS has products that support high-density storage in a small footprint); computing on that data (OSS specializes in multi-GPU platforms for high-speed analytics, inference, AI training, and retraining); and making intelligent decisions based on the knowledge gained from that data.
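As a rough illustration of how these four stages chain together, here is a minimal Python sketch. It is not OSS software or any product API: the function names (`acquire`, `store`, `compute`, `decide`), the `Reading` type, the sensor IDs, and the threshold are all hypothetical, and a simple per-sensor average stands in for real GPU inference.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    """One sample from a (hypothetical) sensor."""
    sensor_id: str
    value: float


def acquire(raw_samples):
    """Stage 1: gather raw data from sensors (here, plain tuples)."""
    return [Reading(sensor_id, float(value)) for sensor_id, value in raw_samples]


def store(readings, storage):
    """Stage 2: persist readings (here, an in-memory dict keyed by sensor)."""
    for reading in readings:
        storage.setdefault(reading.sensor_id, []).append(reading.value)
    return storage


def compute(storage):
    """Stage 3: analyze stored data (a per-sensor mean stands in for inference)."""
    return {sensor_id: mean(values) for sensor_id, values in storage.items()}


def decide(results, threshold):
    """Stage 4: turn results into actions (flag sensors whose mean exceeds the threshold)."""
    return sorted(s for s, avg in results.items() if avg > threshold)


def run_workflow(raw_samples, threshold):
    """Run all four stages in order and return the flagged sensor IDs."""
    storage = store(acquire(raw_samples), {})
    return decide(compute(storage), threshold)


if __name__ == "__main__":
    samples = [("cam-1", 0.2), ("cam-1", 0.4), ("lidar-1", 0.9), ("lidar-1", 0.7)]
    print(run_workflow(samples, threshold=0.5))
```

In a fielded system each stage would map to dedicated hardware (data ingest, NVMe storage, GPU compute), but the data flow from raw samples to a decision follows the same order.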

It supports up to four double-wide add-in cards (such as GPUs) and has 16 NVMe/SATA SSD bays in two removable canisters. The flexibility and utility of this all-in-one server are well matched by its rugged design, suiting a wide variety of applications that need more than storage alone or GPU computing alone. It may be overkill for many applications, but for those that require the highest-performance storage and GPU computing at the edge, this dual-Rigel solution is unmatched.

The Future is Now

The push to support AI applications in the field is increasingly evident. Companies can no longer accept the compromise of uploading data to the cloud, storing and processing it in a datacenter, and waiting for the results to be transferred back to the field. Nor can traditional industrial box PCs support the intense storage and compute requirements of many AI workflows.

One Stop Systems is the solution, leading the industry in rugged HPC solutions of varying scale for edge AI applications.


The character of modern warfare is being reshaped by data. Sensors, autonomy, electronic warfare, and AI-driven decision systems are now decisive advantages, but only if compute power can be deployed fast enough and close enough to the fight. This reality sits at the center of recent guidance from the Trump administration and Secretary of War Pete Hegseth, who has repeatedly emphasized that “speed wins; speed dominates” and that advanced compute must move “from the data center to the battlefield.”

OSS specializes in taking the latest commercial GPU, FPGA, NIC, and NVMe technologies, the same acceleration platforms driving hyperscale data centers, and delivering them in rugged, deployable systems purpose-built for U.S. military platforms. At a moment when the Department of War is prioritizing speed, adaptability, and commercial technology insertion, OSS sits at the intersection of performance, ruggedization, and rapid deployment.

Maritime dominance has long been a foundation of U.S. national security and allied stability. Control of the seas enables freedom of navigation, power projection, deterrence, and protection of global trade routes. As the maritime battlespace becomes increasingly contested, congested, and data-driven, dominance is no longer defined solely by the number of ships or missiles, but by the ability to sense, decide, and act faster than adversaries. Rugged High Performance Edge Compute (HPeC) solutions have become a decisive enabler of this advantage.

At the same time, senior Department of War leadership, including directives from the Secretary of War, has made clear that maintaining superiority requires rapid integration of advanced commercial technology into military platforms at the speed of need. Traditional acquisition timelines measured in years are no longer compatible with the pace of technological change or modern threats. Rugged HPeC solutions from One Stop Systems (OSS) directly address this challenge.

Initial design and prototype order valued at approximately $1.2 million

Integration of OSS hardware into prime contractor system further validates OSS capabilities for next-generation 360-degree vision and sensor processing solutions

ESCONDIDO, Calif., Jan. 07, 2026 (GLOBE NEWSWIRE) -- One Stop Systems, Inc. (OSS or the Company) (Nasdaq: OSS), a leader in rugged Enterprise Class compute for artificial intelligence (AI), machine learning (ML) and sensor processing at the edge, today announced it has received an approximately $1.2 million pre-production order from a new U.S. defense prime contractor for the design, development, and delivery of ruggedized integrated compute and visualization systems for U.S. Army combat vehicles.