Jaan Mannik – Director of Commercial Sales

In my last blog post, What is the Workhorse Advancing HPC at the Edge?, I highlighted how enterprise applications requiring the highest-end compute for their AI workloads at the edge are leveraging data-center-grade NVIDIA GPUs to get even greater performance. Processing and storing data closer to where the action takes place means decisions can be made more quickly, with lower latency, improved security, greater reliability, and much higher performance. In this blog, I’ll be covering the transition from big, power-hungry GPUs to smaller form factor electronic control units, better known as ECUs, at the very edge.

GPUs have traditionally been the workhorse for training and retraining AI models, thanks to their massively parallel architecture, which is well suited to general-purpose computing. Training an AI model typically involves processing vast amounts of data through complex mathematical operations to adjust the model parameters, essentially teaching it to make more accurate predictions or classifications over time. Enterprise-class NVIDIA GPUs like the PCIe Gen4 A100 or the newer PCIe Gen5 H100 are supported by software such as the CUDA platform and the cuDNN library, which AI/ML frameworks use for GPU acceleration, ensuring that AI developers can take advantage of the full potential of their GPUs.

Retraining AI models is also extremely important for improving model accuracy and relevance over time. When new data becomes available, retraining adapts the model to changing circumstances. Retraining typically requires fewer computational resources than the initial training because the new datasets are smaller and the model is already largely optimized. This kind of logic can be applied to real-life situations as well. For example, think of a baseball pitcher in high school who throws a great fastball (I’m reliving my glory days for a moment). To teach him to throw a curveball, the coach will show him a new way to grip the baseball so the ball breaks across the plate. The pitcher doesn’t need to be taught how to push off the mound and release the ball toward the catcher because he’s already learned how to do that. It’s a new, small dataset applied to an existing model (or pitcher), which is retrained to throw fastballs AND curveballs.
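To make the retraining idea concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not how production models are trained: the "model" is a hypothetical one-variable linear fit, the datasets are synthetic, and the names `train`, `big_dataset`, and `new_dataset` are made up for this example. It pretrains on a larger dataset, then compares retraining from the pretrained weights against training from scratch on a small new dataset.

```python
def mse(w, b, data):
    """Mean squared error of the line y = w*x + b on (x, y) pairs."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(w, b, data, lr=0.05, tol=1e-4, max_steps=10_000):
    """Plain gradient descent; stops once the error drops below tol.

    Returns the final weights and the number of update steps used.
    """
    n = len(data)
    for step in range(max_steps):
        if mse(w, b, data) < tol:
            return w, b, step
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b, max_steps


# "Initial training": a larger synthetic dataset following y = 2x + 1.
big_dataset = [(k / 10, 2.0 * (k / 10) + 1.0) for k in range(-50, 51)]
w0, b0, pretrain_steps = train(0.0, 0.0, big_dataset)

# "New data": a handful of points where the slope has shifted slightly.
new_dataset = [(float(x), 2.2 * x + 1.0) for x in (-2, -1, 0, 1, 2)]

# Retraining starts from the pretrained weights (the experienced pitcher).
w_warm, b_warm, warm_steps = train(w0, b0, new_dataset)

# Training from scratch starts from zero on the same small dataset.
w_cold, b_cold, cold_steps = train(0.0, 0.0, new_dataset)

print(f"retraining steps: {warm_steps}, from-scratch steps: {cold_steps}")
```

In this toy setup, starting from the pretrained weights reaches the same error threshold in fewer update steps than starting from zero, which is the same intuition as the pitcher who only needs to learn a new grip.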

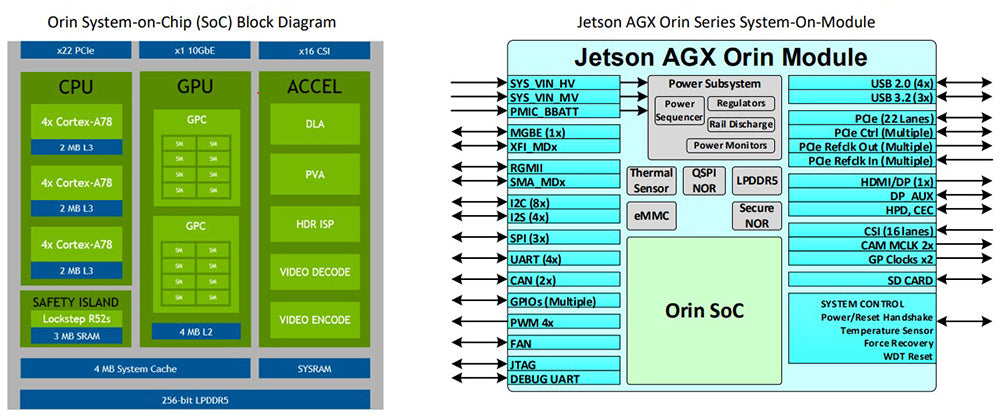

Applying the above logic to seasoned AI models that are ready for production environments at the edge shows why the electronic control unit (ECU) is so important. Edge deployments still need real-time processing, but because of their non-traditional environments they also face tight constraints on power consumption, ruggedization, size, weight, and cooling. The big, power-hungry NVIDIA® A100 and H100 GPUs used initially to train models in a data center are no longer suitable, and can be replaced by specialized ECUs like NVIDIA Orin. NVIDIA Orin modules, such as the Jetson™ AGX Orin module, are highly integrated and powerful system-on-chip (SoC) modules specifically designed to address the limitations of the non-traditional edge environments mentioned above. Each ECU combines multiple Arm CPU cores, NVIDIA’s custom-designed GPU cores, dedicated hardware accelerators for AI/ML tasks, and a variety of edge-relevant I/O interfaces in a single compact, power-efficient package, making it an ideal solution for edge devices with constrained resources. Connecting multiple ECUs together can increase performance and also introduce redundancy, which is extremely important for edge applications like autonomous driving, robotics, and smart cities.

In conclusion, the shift from GPUs to ECUs like NVIDIA Orin in edge computing reflects the evolving demands of modern applications. The move toward power-efficient, compact, and integrated solutions has been driven by the need for real-time processing, low latency, and AI capabilities at the very edge. As technology continues to advance, ECUs are likely to play an increasingly pivotal role in shaping the future of edge computing.
